- Mar 30, 2011

Full source code and documentation can be found at http://pekalicious.github.io/StarPlanner/.

It's about time I shared my final year project with the world. Due to my country's military obligations, I joined the army right after I got my bachelor's degree, which meant I had no time to polish the project after submission. I finally had some time off, so here it is: my dissertation, StarPlanner: Demonstrating the Use of AI Planning in a Video Game.

StarPlanner is a StarCraft bot that implements a Goal-Oriented Action Planning (GOAP) agent architecture. The system includes a blackboard for subsystem communication, a working memory, and a regressive planner for decision-making.

There are two levels of planning in StarPlanner. A high-level strategic planner produces plans based on the current game state, while a low-level build planner creates the production steps needed to build or train the units required to execute that higher-level strategy.
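The handoff between the two levels can be illustrated with a small sketch. It is not StarPlanner's code: the `Step` record, the prerequisite table, and the plain dependency walk that stands in for the build planner are all assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

final class TwoLevelSketch {
    /** Hypothetical strategic step: a name plus the units it needs. */
    record Step(String name, List<String> requires) {}

    /** Low level: turn the units a strategic plan needs into an ordered build list. */
    static List<String> buildOrder(List<Step> strategicPlan,
                                   Map<String, List<String>> prerequisites,
                                   Set<String> owned) {
        List<String> order = new ArrayList<>();
        Set<String> visited = new HashSet<>(owned); // owned items are never rebuilt
        for (Step step : strategicPlan) {
            for (String unit : step.requires()) {
                expand(unit, prerequisites, visited, order);
            }
        }
        return order;
    }

    /** Depth-first walk: prerequisites are appended before the item that needs them. */
    private static void expand(String item, Map<String, List<String>> prerequisites,
                               Set<String> visited, List<String> order) {
        if (!visited.add(item)) return; // already owned or already scheduled
        for (String prerequisite : prerequisites.getOrDefault(item, List.of())) {
            expand(prerequisite, prerequisites, visited, order);
        }
        order.add(item);
    }

    /** Illustrative prerequisite table, not taken from the project. */
    static Map<String, List<String>> demoPrerequisites() {
        return Map.of(
            "Marine", List.of("Barracks"),
            "Medic", List.of("Academy"),
            "Academy", List.of("Barracks"));
    }

    public static void main(String[] args) {
        List<Step> strategy = List.of(new Step("AttackEnemyBase", List.of("Marine", "Medic")));
        // prints [Barracks, Marine, Academy, Medic]
        System.out.println(buildOrder(strategy, demoPrerequisites(), Set.of("Command Center")));
    }
}
```

The strategic level only names what it needs; the lower level decides the order in which to produce it.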

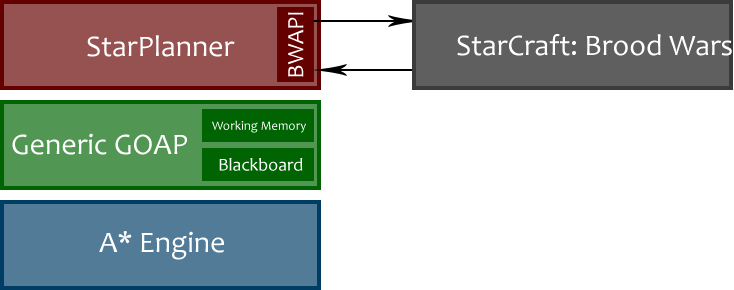

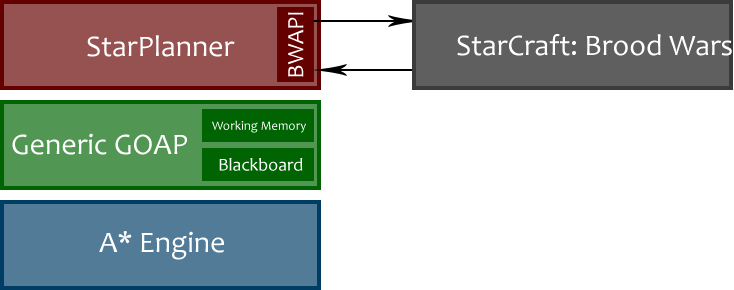

StarPlanner is written in Java and uses BWAPI and, specifically, JBridge to communicate with StarCraft. The architecture is layered: at the base there is an A* search engine; on top of that sits a generic GOAP layer that uses the search engine; and finally StarPlanner itself is a concrete, StarCraft-specific implementation built on top of the generic planner.

StarPlanner Layers

Full source code, documentation, the full report, and the original videos as delivered to City College are available at http://pekalicious.github.io/StarPlanner/.

Post Mortem

I wish I'd had more time for the project so I could have added many more actions for the bot to choose from. The biggest time sink early on was trying to build a 2D XNA game from scratch. That was before I discovered BWAPI in the middle of the semester. Even after switching to BWAPI, a lot of game-side functionality still had to be built, including unit control, training, upgrades, and combat behavior. Although BWSAL handled some of that in C++, it was not available in Java.

The GOAP architecture itself was made possible by Edmund Long's MSc thesis, Enhanced NPC Behavior using Goal Oriented Action Planning. Edmund implemented the architecture in C++ inside a 2D simulation. I ported the code to Java and separated it into the layers described above. That was the easy part.

The harder problem was modeling an RTS well enough to support decision-making. Planning uses a world state, a goal state, and a set of actions to produce a sequence of steps that can move the world from the current state to the desired one. Actions contain preconditions and effects. Preconditions define which properties must already be true before the action is valid; effects define which properties the action changes once it runs. If all preconditions hold, the action can be selected, evaluated with a heuristic, and applied to change the world state.
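To make the vocabulary concrete, here is a minimal sketch of a regressive (backward) planner over a boolean world state. It is not the StarPlanner source: the class, record, and property names are hypothetical, and a plain breadth-first search stands in for the A* engine and its heuristic to keep the example short.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.Set;

final class GoapSketch {
    /** Hypothetical action shape: boolean preconditions and boolean effects. */
    record Action(String name, Map<String, Boolean> preconditions, Map<String, Boolean> effects) {}

    /** Search node: conditions that must hold before the partial plan can run. */
    private record Node(Map<String, Boolean> required, List<String> plan) {}

    /** Searches backward from the goal until the current world satisfies what is left. */
    static Optional<List<String>> plan(Map<String, Boolean> world, Map<String, Boolean> goal,
                                       List<Action> actions) {
        Deque<Node> frontier = new ArrayDeque<>();
        Set<Map<String, Boolean>> visited = new HashSet<>();
        frontier.add(new Node(new HashMap<>(goal), List.of()));
        while (!frontier.isEmpty()) {
            Node node = frontier.poll();
            if (holdsIn(world, node.required())) return Optional.of(node.plan());
            if (!visited.add(node.required())) continue; // requirement set already expanded
            for (Action action : actions) {
                Map<String, Boolean> regressed = regress(node.required(), action);
                if (regressed == null) continue;
                List<String> plan = new ArrayList<>();
                plan.add(action.name()); // searching backward, so earlier actions go in front
                plan.addAll(node.plan());
                frontier.add(new Node(regressed, plan));
            }
        }
        return Optional.empty();
    }

    /** True when every required condition holds; properties missing from the world count as false. */
    private static boolean holdsIn(Map<String, Boolean> world, Map<String, Boolean> required) {
        for (Map.Entry<String, Boolean> e : required.entrySet()) {
            if (!world.getOrDefault(e.getKey(), false).equals(e.getValue())) return false;
        }
        return true;
    }

    /** Requirements that must hold before the action runs, or null if the action does not help. */
    private static Map<String, Boolean> regress(Map<String, Boolean> required, Action action) {
        boolean relevant = false;
        Map<String, Boolean> next = new HashMap<>(required);
        for (Map.Entry<String, Boolean> effect : action.effects().entrySet()) {
            Boolean wanted = required.get(effect.getKey());
            if (wanted == null) continue;                        // effect touches nothing we need
            if (!wanted.equals(effect.getValue())) return null;  // action would undo a requirement
            relevant = true;
            next.remove(effect.getKey());                        // satisfied by this action
        }
        if (!relevant) return null;
        for (Map.Entry<String, Boolean> pre : action.preconditions().entrySet()) {
            Boolean existing = next.putIfAbsent(pre.getKey(), pre.getValue());
            if (existing != null && !existing.equals(pre.getValue())) return null; // conflict
        }
        return next;
    }

    /** Illustrative action set, not taken from the project. */
    static List<Action> demoActions() {
        return List.of(
            new Action("BuildBarracks", Map.of(), Map.of("haveBarracks", true)),
            new Action("TrainMarine", Map.of("haveBarracks", true), Map.of("haveMarines", true)),
            new Action("BuildAcademy", Map.of("haveBarracks", true), Map.of("haveAcademy", true)));
    }

    public static void main(String[] args) {
        // prints [BuildBarracks, TrainMarine]
        System.out.println(plan(Map.of(), Map.of("haveMarines", true), demoActions()).orElseThrow());
    }
}
```

Searching backward from the goal means only actions whose effects contribute to an outstanding requirement are ever considered, which is why `BuildAcademy` never appears in the plan.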

Initially, I wanted the system to support arbitrary data types for both preconditions and effects. In that version, the world state could contain integers, booleans, or anything else. For example, a goal state could express marines = 3, and the resulting plan might be Build Barracks -> Train Marine -> Train Marine -> Train Marine. In practice, that proved more difficult than expected, so I moved to a boolean-based approach.

That simplification introduced its own tradeoffs. A precondition like haveMarines = true does not capture whether the bot has 10, 12, or 100 Marines. To work around that, I decoupled unit production from building planning. Training became the responsibility of a dedicated TrainManager, which continuously produced units, as long as resources were available, until a new plan was generated.
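A rough sketch of that division of labor, assuming a per-frame `update` call and mineral-only costs. Only the `TrainManager` name comes from the project; the method names, the one-unit-per-update rule, and the costs are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

final class TrainManagerSketch {
    private String unitType;   // null until the first plan arrives
    private int mineralCost;
    private final List<String> queued = new ArrayList<>();

    /** A new plan replaces whatever was being trained before. */
    void onNewPlan(String unitType, int mineralCost) {
        this.unitType = unitType;
        this.mineralCost = mineralCost;
    }

    /** Called once per game update; queues one unit if affordable and returns the minerals left. */
    int update(int minerals) {
        if (unitType == null || minerals < mineralCost) return minerals;
        queued.add(unitType);
        return minerals - mineralCost;
    }

    /** Read-only snapshot of everything queued so far. */
    List<String> queued() {
        return List.copyOf(queued);
    }

    public static void main(String[] args) {
        TrainManagerSketch manager = new TrainManagerSketch();
        manager.onNewPlan("Marine", 50);
        int minerals = 120;
        minerals = manager.update(minerals); // one Marine queued, 70 minerals left
        minerals = manager.update(minerals); // another Marine queued, 20 left
        minerals = manager.update(minerals); // cannot afford a third, still 20
        System.out.println(manager.queued() + " with " + minerals + " minerals left");
    }
}
```

The planner only has to decide that Marines are wanted; how many get trained falls out of how long the plan stays active and how many resources arrive in the meantime.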

The bot is not, by any stretch, a strong AI opponent. It supports only a limited action set and can barely win a normal game, except on very carefully chosen custom maps used for demonstrations. There are two reasons for this. First, I had to build a lot of missing systems around the planner: unit movement, combat logic, ability usage, upgrades, and so on. Second, at the time, I simply was not a particularly strong StarCraft player myself.

I did, however, spend a lot of time following the scene around StarCraft II, which launched right in the middle of the project, and it became very obvious how much I still had to learn about real strategy design in RTS games. A lot of that perspective came from Sean Plott's Day[9] Daily videos, which helped clarify the difference between strategy and tactics.

All of those delays also meant I missed the first StarCraft AI Competition, which had been announced shortly before I began the project. That was one missed opportunity I definitely felt.

Final Thoughts

Even though many things could have gone better, I remain very satisfied with the result. When I started the dissertation about two years earlier, planning was still a particularly exciting area within game AI, and I was proud to be working on it. I still am.

In fact, I enjoyed building StarPlanner so much that it helped solidify a professional goal: I wanted to work in the game AI industry.

After attending the first Hellenic Artificial Intelligence Summer School, I was on my way to the Paris Game AI Conference, while also building my first XNA game for Xbox Live, which would become material for a future post.

So once my military obligations ended that August, I planned to fly to the United States looking for a job. It was an exciting moment, and I meant it when I wrote it: wish me luck.